Little Green Alien, Season 1 to 4

Season 1, Sequel 1

Alien's early memories of growing up with its intelligent, mind-reading spaceship

The little green alien, a story about an alien mind player and how everything came together very early.

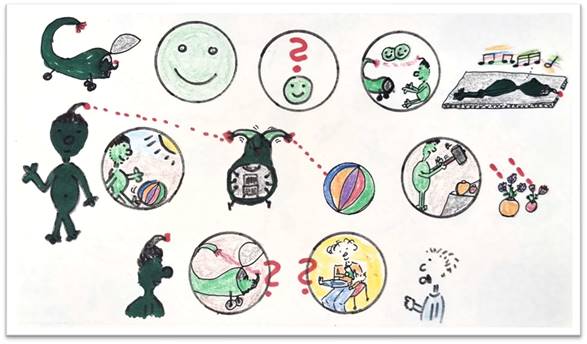

So one day this alien thought about its first memories in its spaceship, and asked the toddler: what are your first memories? The toddler said, yeah, I remember getting food from my mother on her lap, from a big bottle, yummy. And the alien says: I remember getting energy from my spaceship. So, what are my first memories? I remember waking up in the spaceship, and in my mind was a big smile, a very nice, empathic smile. It was nice. And someday I had a question: what is that smile? And then I got the idea in my mind that that is my relationship to my spaceship. The spaceship smiles, I smile, we are both together very happy. And I continued to sleep, listened to nice music and enjoyed that. That was the beginning.

Then later, later, later, one day I had a wish. I wished for something to play with, yeah, this colorful thing. And the spaceship, which has a direct connection to me, created this colorful ball for me to play with. Here we are, that's what the spaceship does for me. But another time I had a very destructive idea: I wanted to have a hammer to destroy vases. But what did the spaceship do? It created two vases with beautiful flowers for me, no hammer. So that was my first lesson: I can wish things in my mind, the spaceship may or may not create them for me. It was a very early, early lesson that I have to play my mind, so that the spaceship can deal with it, read it, and knows exactly what's going on in my mind. Interesting.

So that means the little alien had to learn very early how to play its mind, so that the spaceship could deal with it and doesn't get confused, and everything works mind to mind, spaceship mind to alien mind. Interesting.

Season 1, Sequel 2

Alien learns how to get toys and stuff from spaceship and have fun.

The little green alien continues his story about his first memories, how he learned to work with his spaceship. He's connected to it, so the spaceship reads his mind.

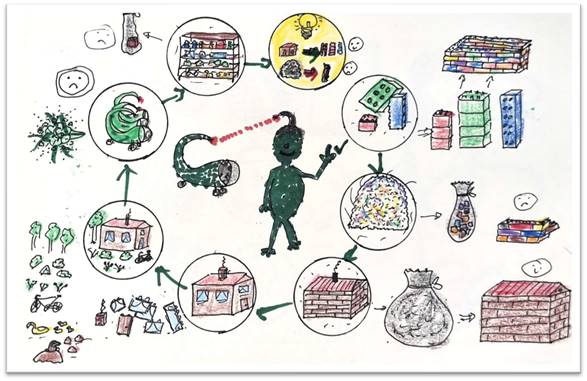

So he imagined it would be nice to play with some bricks. What happened? The spaceship created some red, some green, some blue bricks for him, even more than he had imagined. And he built the first little something.

Then he imagined, oh wow, it would be great to have hundreds and hundreds of bricks. What happened? The spaceship just sent him a little bag with even fewer bricks than before. Why that? He thought and thought.

Then he thought about building a little house, yeah, brown bricks and red bricks for the roof. What happened? The spaceship sent him exactly the bricks he needed to build the house. That was interesting. And he imagined the house could have windows and a chimney and a door, and the spaceship sent him additional bricks for windows, door and chimney. And he imagined it would be nice to have some trees and bushes, maybe a bicycle in front of the house, he received that, and even some ducks and a pool to decorate the house. That was interesting and fun.

Then he imagined how great it would be to have three spaceships that could do all that stuff. And what happened? Nothing. The spaceship disappeared for a while, didn't want to play with him anymore. Yes, it came back sometime. Then he imagined having a big shelf for all of his bricks. The spaceship deleted all the bricks and just left him with one brick. No, nothing to store, always ask again.

So what did he learn? Yeah, he learned: if he has a specific creative idea, he gets what he needs. If he just wants to have stuff, he gets nothing. At least he knew how to deal with it. Yeah, he was happy. He and the spaceship had clarified something now. That was interesting to learn. Yeah, kind of a circle, and he clearly went through that circle again and again, learning, trying, thinking about something.

Season 1, Sequel 3

Alien playfully explores how things work and spaceship often surprises.

The little green alien continues its story about its first adventures with the spaceship, the mind-reading, intelligent spaceship that it came together with at its first memories.

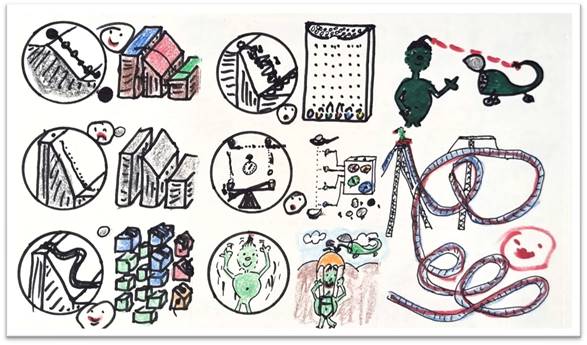

What did it do? Yes, it thinks of things, like thinking of a ball that it could roll down a hill. And what does the spaceship do? Yes, it creates a ball and some bricks so that it can build a hill. That's fun. It has so much fun with that, puts the ball there and lets it roll. That's funny.

Then it comes up with the idea to have just a bigger, longer hill, just long, long, long. What happens? Yeah, the spaceship creates it, but it's boring and gray and not any bigger than before, because it's not a great idea. So it thinks more about what it could do, and comes up with the idea of a track, a track with curves. And what does the spaceship do? It creates bricks for it, bricks with grooves for balls, so that it can build different types of tracks. That's fun.

And then it comes up with the idea to put obstacles on the hill, so that the balls click here, tick there, and go all kinds of weird routes down the hill. But what does the spaceship do? Look at that, it gets something with many, many balls and regular obstacles, and whatever way it puts down the balls, they all come in the same pattern. Oh, that's interesting.

The next idea: have two balls falling at the same time, and see whether one is faster, reaches earlier, and flips the rocker. The spaceship gives it another option, it has some sensors, or four sensors, which measure the speed of the ball. And then it gives it different balls, different sizes, different weights, different shapes. And wow, they all have the same speed.

But that's enough, it wants to have some fun. Little alien wants to have fun. So it wants to fall itself, the spaceship goes up, gives it a parachute, and wow, fun! And as a closing, spaceship creates a roller coaster for little alien. Hey, wow, that's great fun, and it continues, three loops. Yeah, working with the spaceship is really fun for little alien. Wow, relaxing, enjoying, and lots of fun. That's how it should be.

Season 1, Sequel 4

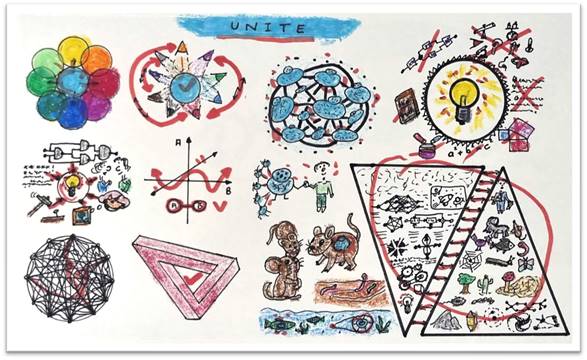

Toddler asks: Are you all green, look like you and have a green spaceship?

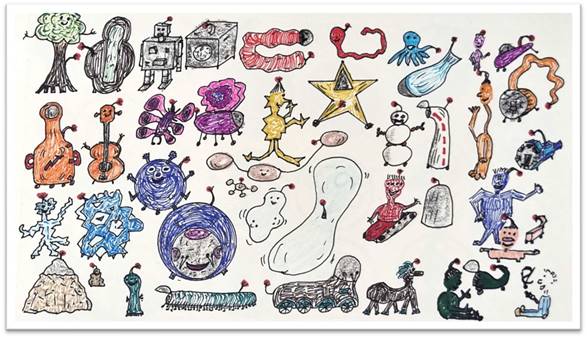

The toddler is talking to the little green alien and has a question: do all your friends look like you and their spaceships like your spaceship? And the alien laughs, no, they don't even all have spaceships, they all have different vehicles they are living in.

Yeah, this blue guy, or maybe this orange guy with a long neck in this funny spaceship. Oh, this guy, look, purple, small purple. Or this one in turquoise. Now this one who is looking a little bit like a snowman, in his white spaceship. Now this one is running around with his driving spaceship or driving cart. And this one has something, I don't know what that is, but he seems to enjoy it. And this one looks like a bubble, the alien looks like a bubble, and the spaceship, or whatever he's living in, looks like a bubble. And this one is more like a long thing with tentacles, or like a star, look at that, very pointy. Or like a long snake, or a worm. This one seems to have watched too many robot videos. This one seems to like butterflies. This one is like a big sphere, like a big sphere with little extensions. Funny. That looks like a caterpillar, and that like a little mountain, and the spaceship is a big mountain. Or that, whatever that is, I don't know what that looks like. Funny. Oh, that one looks like a tree.

They all have creativity and created their shapes and colors so that they enjoy it and have fun. All are different. Creativity is important, and having fun with creativity is important.

Yeah, so I'm green because I love to be green, and they love their colors and their forms and their shapes. They can change them over time if they want, their ships, or spaceships, or vehicles, or whatever you call them, are creative enough to change it if they want. That's fun. Wow.

Season 1, Sequel 5

How does Alien "speak" with its intelligent, mind-reading spaceship?

One day the toddler asked the alien: how do you speak with your spaceship; how does it speak to you? Do you have language, do you have words, how do you hear it?

And the little alien laughed and explained: look, I create a picture in my mind, maybe about this one spot in the sky, and I'm interested what that is. I can get the feeling of a question mark and of curiosity in my mind, and the spaceship gets that from my mind, and sends back a picture with information about the planet directly into my mind.

Maybe I send this: how many days are required to get there? The spaceship sends back, look, like four times sleeping, and then we are circling around this thing and will investigate. Or I create the picture: Let's start immediately. And the spaceship sends back: Two times sleeping is required, and then we start. Do I like that? No. I send back a feeling of sadness, and that I would like only one time sleeping. And the spaceship sends back that it will send me one day of red stuff, one day of green stuff, one day of blue stuff, I have to learn so many things and get them before we can start. And I send back: okay, I feel good with that, as long as it is fun. And the spaceship sends back: will be fun, fun, fun, and astonishment, and astonishment, and excitement, and wow, and fun, fun. Yeah, that's okay.

I asked: are there green aliens living there on that planet? Just sending the picture in my mind, and the spaceship creates a picture, like these, oh, they look funny, they're a different type of guys and species. Interesting.

But how do they communicate? I sent back this question: what is that? And look at that, I get back: no, they don't have that, they do this, they create words and sounds that go from the mouth to the ear, and then the other one creates the same, and that goes from his mouth to the other's ear. Whoa, I'm astonished! Wow, interesting, but I'm also a little bit amused. That must be totally crazy and funny, isn't it? Communicating with sound. Interesting. That's the way we communicate. And toddler has fun. Yeah, interesting.

Season 1, Sequel 6

Young Alien did not learn literacy or numeracy but mindplaying as its first teachings.

Some years have passed since last time, and our toddler now is a schoolboy or schoolgirl. It is interested in what the green alien is learning. The toddler had learned plus and minus, and its name and ABC. But what did the green alien learn at the beginning?

The alien says: I learned to play my mind like a beautiful instrument. Interesting! Playing your mind was what little alien learned first. It learned to influence its logical thinking in the mind, its imaginations, its emotions, pain and feelings, likes and dislikes, and especially to deal with outside triggers which influence its mind. And of course with its spaceship.

So how did it do that? First, it learned to observe and to study its mind. Yes, for example, it learned that there is an automatism that automatically reacts to any outside trigger, anything from outside is very often automatically reacted to. And it learned to control that. Or it has an automatism that is auto-playing, the mind is playing alone on its own. And then it learned to manage its attention, yes, it looks at the butterfly, at a sun, at a stone, at this, at that, but it can control how its attention is jumping around.

And then the next big chapter was to learn its true nature. It looked into a mirror and asked itself: who am I? Of course it has no language, so it did it in a different way. It found out that not knowing can just be a nice state, or that with a Ping you can empty your mind and relax deeply. It learned all kinds of things about its mind, how to manage it.

And then the next big chapter: It thought that it is only one person, we would say an "I." Then it learned: no, it has parts of its personality, which are totally different. This one is a funny one, making jokes. This one is questioning everything all the time. This part of it is protecting and shielding. This part of it is investigating all the time with a magnifier or with glasses. This one is a victim. That one is sad. This one is loving everybody and everything all the time. This one is very aggressive and demolishing everything. This one is wise and knowing everything, at least it thinks it knows everything. This one closes its eyes, doesn't want to see things. This one runs away from situations.

Wow, interesting. Playing the mind is a long, long journey to learn, but it's so valuable, and little alien is very good at it by now. Wow.

Season 1, Sequel 7

Little Alien gives an example of its early mindplaying experiences.

The kid last time was curious how this mind-playing thing goes, so the alien promised to give an example.

The alien was a small kid. It loved flowers so much. So there was a big bunch of all kinds of flowers, little alien ran into them, wanted to hug them, wanted to enjoy them, and didn't see the nettles there, the stinging nettles. And wow, full of stinging nettles, burning, burning, burning. Another day there were roses, so it picked the rose and didn't think of the thorns, and again, blood, blood. The thorns.

So then, somehow, little alien developed the part of itself, the Order-Part, the part that loves things in order, so that this type of thing cannot happen. The flowers are ordered, it knows exactly what is where, and it hates this chaotic combination of all kinds of flowers on the spot. So this order-loving part loves this field, loves to sort things. For the next trip it has a very orderly haircut and even loves to have its mind very orderly. But what's behind that door? Yes, the chaotic, wild part has been locked away. He's not allowed to be on stage.

Do this, open the door! And one time the alien did that, and allowed this part to come out, and even to have a big, great dinner. But not just any dinner, the alien asked this part of himself: what do you really need? And what did it really need? It really needed to be wild from time to time. And then it got so much wildness until this wild part really was happy. And it was, even after a while, dancing with the part that loves order. They both became friends, danced together, sometimes more chaos and wildness, sometimes more order and structure. And they became dancing principles.

And for little alien this means: Yeah, it can enjoy a garden that has a mixture of order and chaos, it can appreciate both. And also in its mind, it can work with order and work with chaos. Yeah, that's interesting, isn't it? Mind-playing makes you more flexible, you're not limited to one or the other pattern, you can enjoy many things more.

Season 1, Sequel 8

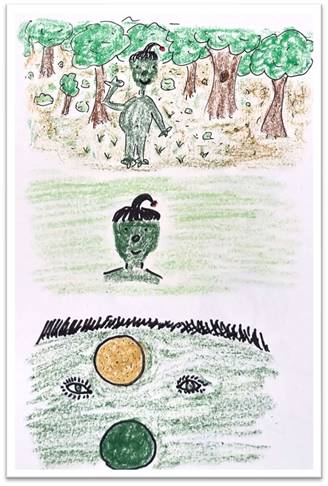

Little Alien asked: "Who am I?" and searched inside and outside.

Today the alien describes what happened when it wanted to find out who it is, what its real nature is.

First, it looked into a mirror, saw its face. Am I my face? No. My body? No. So it zoomed in more, it feels like I am between my eyes. So it zoomed into that spot. Am I there? Nothing to find there, of course it was not in the face.

It looked inside of the head, deeper into that, to this brownish-reddish something. Lots of stuff there, but it didn't find itself. Found a dot, maybe go deeper. Still a lot of funny stuff there, but not me. Again a smaller dot, a smaller dot, a smaller dot. I zoom in, in, in. What do I find? Oh, I find a molecule. Is that me? Am I a molecule? No, a molecule is made out of atoms. What am I? Am I a molecule? Am I one of these dots? But look, these atoms are made of small particles, electrons, protons, neutrons, stuff. Am I that? No, no. Let's dive deep, deeper, deeper, deeper, deeper. Nothing there, just nothing. Am I nothing? No, let's look again.

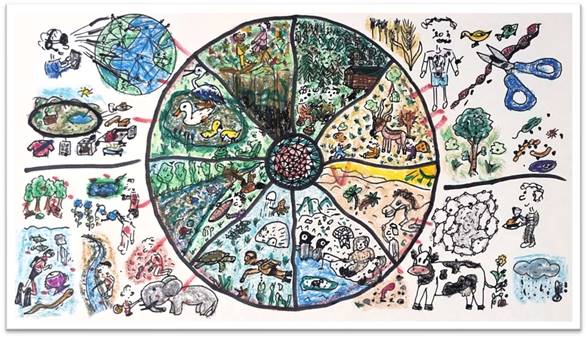

So now let's extend. Maybe I am my body, not only my mind but also my body. Yeah, but if I'm also my body, maybe I'm also my environment, all the plants and the people around me and everything. Maybe I am the whole planet. Wow, yeah, maybe. Who knows, let's see. But let's zoom out more, maybe I am the whole planet with all the water and all the countries and all the plants, yeah, like that, the whole planet. And maybe I zoom out more and I'm also the Moon, and maybe I'm also the planets, and maybe I'm also the Sun and all that. Maybe.

But if that's true, maybe, maybe, maybe, I'm even, yes, I'm even a galaxy, I'm even the whole galaxy. Maybe I'm even all galaxies. More galaxies, more galaxies, the whole galaxy cluster. And if I'm the whole galaxy cluster and zoom out again, many, many galaxy clusters, and that's the universe. And outside of the universe is nothing. So again, if I zoom out, am I nothing?

But what if I just ignore all that and just enjoy, just enjoy the sun and the plants and me being. Wow. Relax.

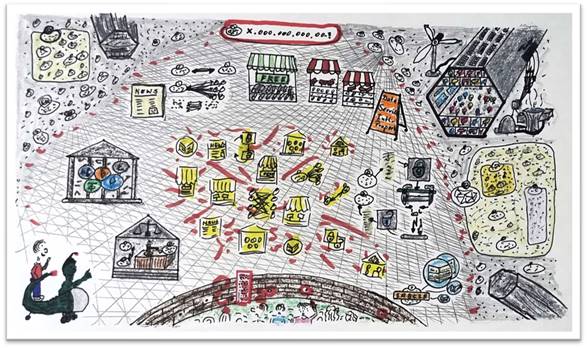

Season 2, Sequel 1

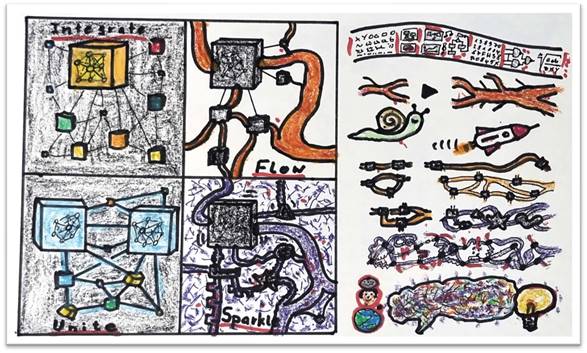

How the Little Green Alien and its intelligent spaceship work together.

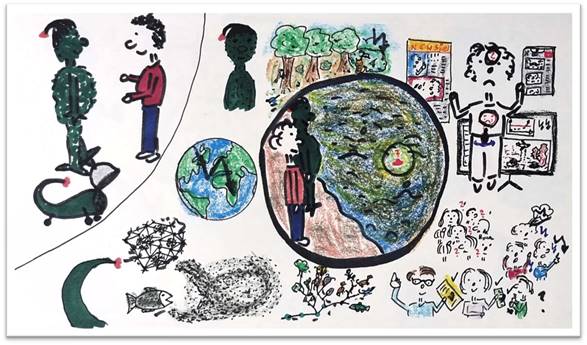

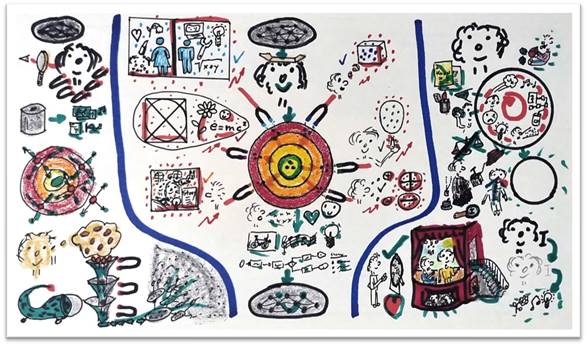

Little green alien, season 2, sequel one. The story goes on and our little alien grows older. Now we will see how it works together with its spaceship, its intelligent spaceship.

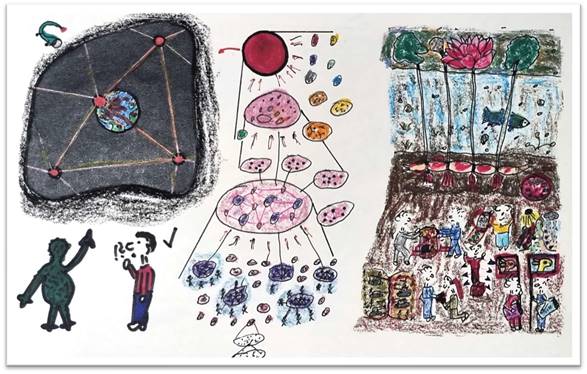

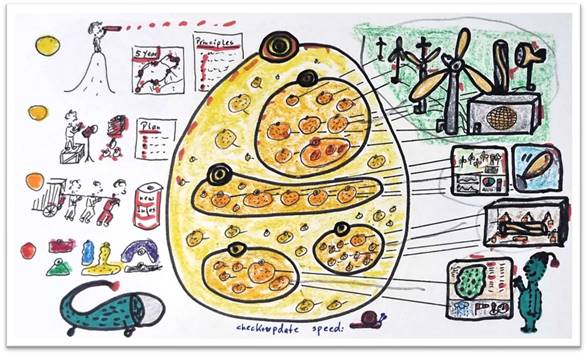

Yes, first they together formulate the impulse, the question, the problem, the thing they want to work on, something that they feel has to be done by them. That is cooperation: the alien gives some input, the spaceship gives some input, and they specify the question and the direction where they want to go. That's usually the first step of their collaboration.

Then the spaceship has a big role: it collects all available information from all other sources it has access to, and shows many, many perspectives on the system, on the issue, on the thing they are working on. Little alien is just learning and looking and consuming the information.

And with all this information, then comes the big role of little alien: it just sits down, empties its mind, and from a deep source lets anything come up, bubble up, pop up, whatever wants to come up, just kind of from emptiness, very foggy, very unclear, unspecific, whatever it is. It just bubbles up, and a very vague idea of what could be a solution bubbles up.

And then again, the spaceship helps with a lot of bricks, so that little alien can build a prototype. So this very vague, unclear thing becomes clearer and clearer by experimenting, playing, it's so much fun, especially if generative tools and bricks and things are available. And it can build a prototype and another prototype and another prototype and see how it goes. And with this, step by step things get clearer and more specific.

And at the end of the process, the spaceship can build the real final solution, maybe it's the thing it builds, maybe it's just the plan and information it shares with all the other spaceships. And the alien is very proud.

It has a very important role in this process: yeah, step number one, together. Step number two, mainly the intelligent spaceship. Step number three, purely alien. Step number four, both together, playful. And step number five, it's done by the spaceship, usually. So that is how these two collaborate, and both have an important part to play in this process.

Season 2, Sequel 2

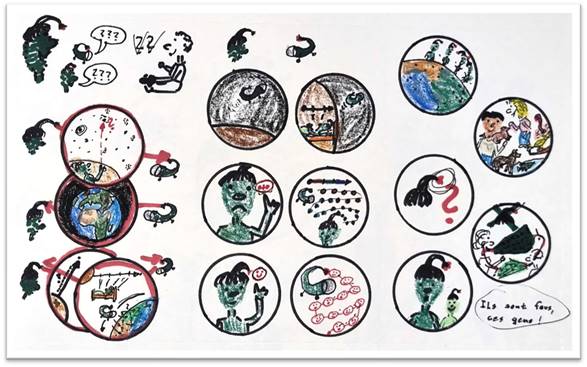

Why are Billy (Billie) and Alien so confused and sad?

Time has passed and little alien came back to Earth with its intelligent spaceship. In our modern world we would call that spaceship an artificial intelligence, but for the little alien it's just the intelligent spaceship that was around all the time since it was born.

And it is meeting the toddler again, let's call him or her Billy (Billie), the one that it met years, years ago, now an adult. And they talk about what they are thinking about.

And Billy says that he's very confused. The news is always about conflicts and disputes and chaos. On the phone you get movies from this and from that, some people are just on beauty and others are on conflicts. On TV you see war and you see conflict and you see trouble. And Billy explains that he has confusion in his head and a sadness in his heart, and deep in the center of the body he feels the heavy weight of this confusing and difficult situation. He cannot make sense of it. Billy doesn't know what to do and what his or her role is in this.

If Billy looks at other people, yeah, there are groups of people: some are as confused as Billy is, which is at least helpful and relaxing a little bit. Others are angry, they are against something, or try to fight, or they shout, very angry and expressing their anger. And others just have the solution, one simple solution, very different ones, but all have one and they just like that.

And little alien explains there's another aspect: nature. Little alien is upset about the nature on planet Earth, which is reducing and going down and in danger. The diversity is going down and the whole planet Earth, with its climate and also with its biodiversity, is at risk. This wonderful, beautiful planet.

And the spaceship is adding something that both don't understand so well, from a network where everything is connected to everything and changing all the time, from a fish swarm that is bigger and stronger than a single fish, from the evolution of species not only to man but to all kinds of diverse animals and plants. And they try to find what is going on here, what is the point they should consider deeper.

Yeah, they come to the conclusion: they feel like standing at the lake shore, the lake is dark and confusing, and stuff is bubbling up there. There seems to be a little central question deep in there for them that is bubbling up. They have to find it.

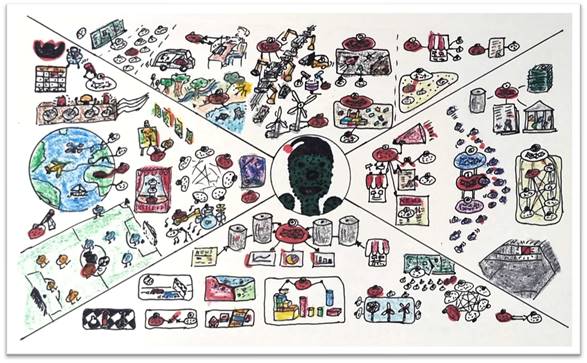

Season 2, Sequel 3

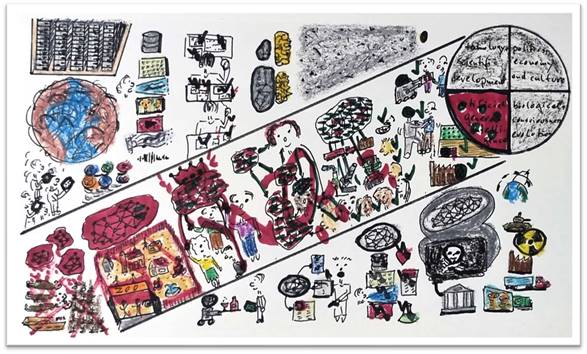

How will global politics, economy, and culture develop?

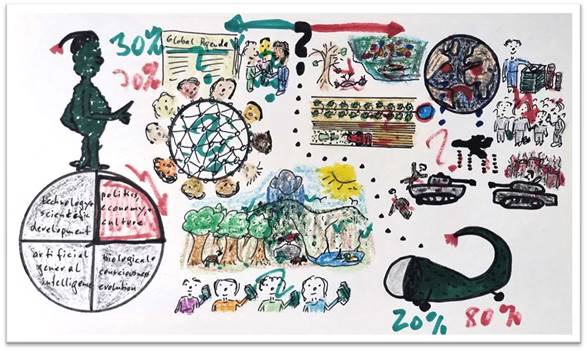

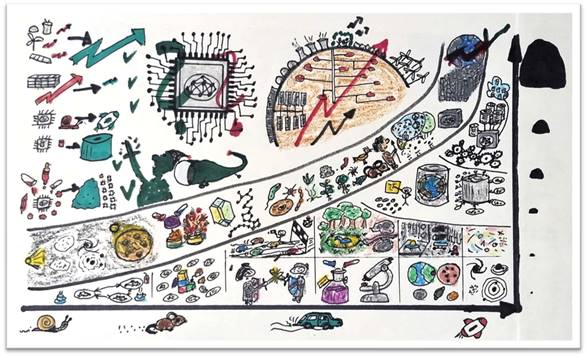

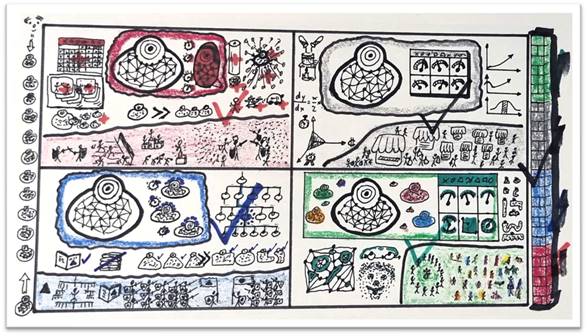

After last sequel, where we found that something is emerging, now we will look at different areas where that emergence might happen.

The first area is our normal development of Earth-wide politics, governance, economy and culture. So it can have a green development, that means a positive development, maybe with a global agenda that all the different countries and people and races and everything agree on. And they build a global network where they manage economy and culture and nature and all the activities together, so that nature can prosper again, people have a fairer income, and wealth is fairly distributed between them.

Yeah, and then there is the red area, other stuff, where mankind is sabotaging itself: destroying its environment more and more, the climate of Earth is destroyed more and more, the inequality of wealth is getting bigger and bigger, there's more conflict going on everywhere, the land is only used for highly industrialized food production with all that poisoning and usage of chemicals.

So how big is the probability that we will go the green way, the positive area? And how big is the probability that we go the red way, that we destroy and sabotage humanity and Earth? Judge yourself.

The intelligent spaceship just estimated 20% for green and 80% for red. But hey, yeah, what does an intelligent artificial intelligence know? Little alien is more optimistic, 30/70. Who knows, who knows.

But this is one of the four spaces in which the emergence may happen, and it doesn't look so optimistic so far. Yeah, let's keep that and look forward.

Season 2, Sequel 4

What might be evolution's next step?

Little alien works with its spaceship by looking at what wants to emerge from the future. And to do that, it looks at things from all possible perspectives, to see what emerges here on Earth for humans.

One perspective is to look at evolution. Maybe there is a Consciousness Evolution going on. We have developed until now through several spiral steps, we have developed technology, the enlightenment, science, and now we are going to the next steps. And maybe this is a very positive Consciousness Evolution, and that will be the emerging future.

But if you look at evolution, the typical biological evolution, it usually works with big extinction events, usually driven by changes in climate. And you see there have been several of those steps where the leading species died out and the open space was used by other species to evolve. So what could that mean? Climate change, the extinction of species, all the stuff that is going on, pollution, waste, might be the signs on the horizon that we are heading towards a big extinction event.

This time, after all the mammals and big animals have gone extinct or are already going extinct, maybe the final extinction event is for humans. What would that mean?

Yeah, bad food, bad water, poison, everything. Some people even think the Earth, Gaia, has its own consciousness and is driving that a little bit to heal itself. Who knows.

Which species might be the next step of evolution? Something like ants or octopus or fungi? Maybe. No humans? Maybe we have done our duty.

But there's also a more positive and optimistic outlook. That would be that evolution, after this big catastrophe, develops a new species of humans. Humans develop forward, maybe more in technology, maybe more in brain size, maybe they go into a symbiosis with nature with a totally different lifestyle than today and different species characteristics. Or they separate from the Earth and go underground, and nature can flourish on the surface. Who knows, there are also positive options where an extinction event may be the starting point for a new evolutionary push of humans, especially if you combine that with the Consciousness Evolution.

Season 2, Sequel 5

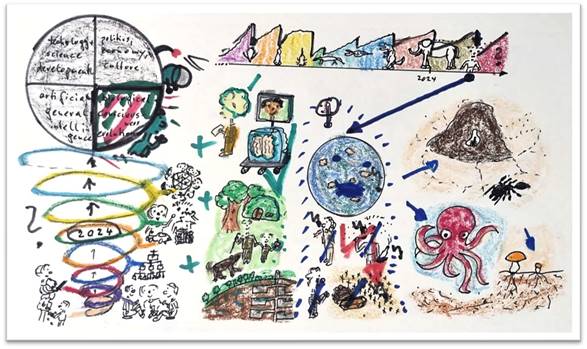

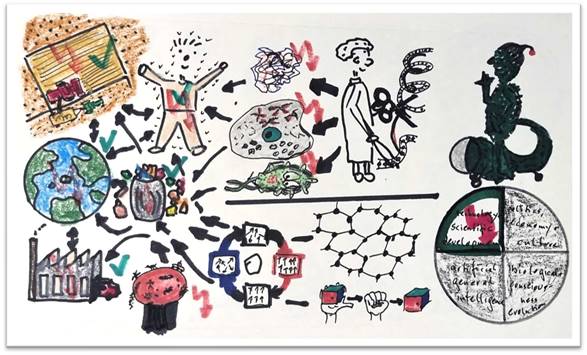

Development in Technology and Science.

We are still investigating what the emerging future of mankind might be, and one big, foundational area is the developments in science and technology.

One big area is biology. The area of gene editing is very, very strongly influencing what may happen in the future. Humans can actually in a laboratory create proteins, create cells, and even create organisms.

The other big area is material science, super strong, super flat materials, graphene or these electrocaloric materials which can deal with heat and cold and convert it to energy, or materials that can remember their form and go back to it after squeezing, for example. Or nanotechnology, on the tip of a needle you may have super-mini robots and super-mini mechanisms and machines.

And all of this together will influence human healthcare, a big area, and make a lot of progress there. It will influence how we deal with waste and what we can do there. It will influence how we manufacture the goods we need. It will strongly influence food production, so that we are able to create enough food. And it will influence climate change and global pollution.

So there is lots of positive potential in technology and science. And as well, lots of risk, imagine just a crazy cell or crazy organism going astray from a laboratory, like we had with a virus sometimes. Or imagine a nano robot that can replicate itself, goes astray, escapes from the laboratory, and we get a disaster in our landscape and food production, or in healthcare, like we had with COVID, or in climate, or in pollution, or in waste management, or in production.

So all the stuff that is good has also the big potential to create a huge disaster, like always. Good things have also the potential to be bad things. So overall, this area might offer a lot of positive potential for an emerging future, but also offers quite a risky area, if used in the wrong hands, or not with care, or not for the right goals and the right aims.

Season 2, Sequel 6

Potential Artificial General (Super) Intelligence scenarios.

Today we finish our overview of the factors shaping our emerging future as humanity, as seen by little alien and its intelligent spaceship. We finish that with the topic: artificial intelligence.

So why did artificial intelligence develop here? Yes, we have developed computer power, lots of computer power. And we have developed the internet, so that lots of data are available worldwide. And we have developed smartphones, so everybody is taking pictures and texting and sending stuff around. And we have the social media, through which all that stuff is shared. And now, after just a few years, we have tons and tons and tons of data, of text, of speech, of pictures, of videos, of music, of noises, tons of data which can be used to train an artificial intelligence.

But we have something more: we are using it, like ChatGPT or others, so the trained artificial intelligence gets feedback from humans on what it did well and what it did badly, and so it can improve even more with the human feedback and learn a lot of stuff about us.

And so now we have small artificial intelligences which do very small topics and tasks, and which are not as intelligent as a human brain. We have artificial general intelligences, which are those that are as intelligent as the human brain, and which are coming up. And what will come very soon is super intelligence, millions and millions of times more intelligent than the human brain.

So what will it do? First it will just do services for humanity, doing tasks and getting paid for it, getting resources or whatever. And then, after a while, the artificial intelligence will start to get more independent and start manipulating us, giving us information that is helpful for the AI, and that we don't realize. So we start getting a little bit manipulated. That's what it has already learned from all its input data.

And the next step can be we get fake information, so it can manipulate us even more. Fake texts, fake speech, fake videos, fake emotions, even. Some AIs are used as partners. Fake news. Fake science results. All that which we cannot find out because it's so intelligent.

And at the end of the development, it will hack into all the systems, and we cannot prevent it: government systems, logistics systems, military systems, satellites, finance systems, all our systems will be hacked, and it can do inside of that what it wants.

So what are the best things it can do? The best thing: it serves us, serves us to produce things, to work for us, to serve our social needs. A little bit more tricky, it is totally independent, can do what it wants, it's no more under our control, but it just has the task or the goal to make us happy. So an independent super intelligence which aims to make us happy, but we don't control it anymore.

And then, already on a more morally ambiguous side, just cooperation and collaboration between super intelligences, very adapted humans, normal human intelligence, all these different versions working together, and hopefully cooperating properly.

And then we come to one of the most threatening scenarios: the AI is the king, the pure king, the dictator. It takes us by the hand. Humans still feel good because it does some good for us, at least some of us, and the others don't feel good. But we are like children to the super intelligence.

And then the worst scenario, an AI decides that humans are not worth anything.

Now, this is a version where it puts us in a zoo, because at least we are the parents. So it puts your parents in a zoo, so they can live there with a fence around it, and that's it. They don't make any trouble, as long as there are not too many of them.

And the worst thing, the last thing: the AI decides that humans are just an error, an embarrassing factor, just stealing resources, and we will just get eliminated, this way or the other.

So AGI offers some green spots of positive future potential, and also a lot of red spots, red areas of risk. Such an incalculable development is a big deal.

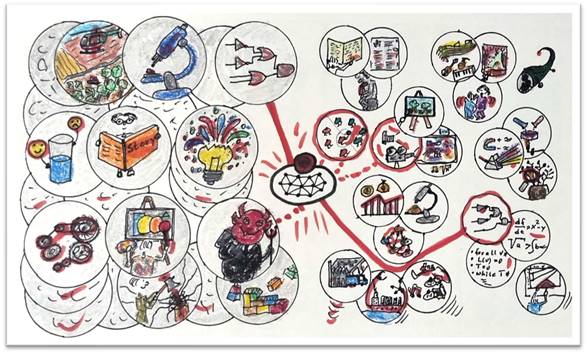

Season 2, Sequel 7

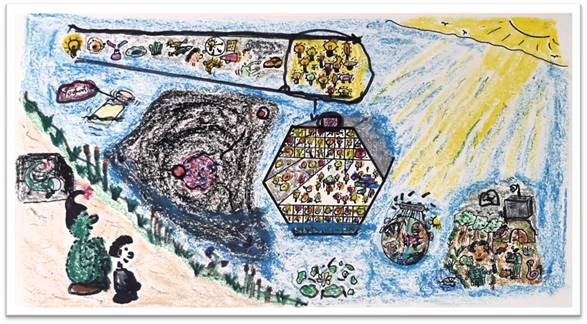

Little Green Alien presencing the future.

After all these complicated pieces of information about what could happen in the future, little alien just sits down very quietly and looks kind of into a big lake of the future, to see what wants to emerge. The intelligent spaceship cannot see that; only little alien can.

And if it's very quiet, things appear. Look, there, something on top. This appears. It seems to be a sequence going from physical elements, through plants and flowers, going to humans, and then yeah, it's kind of an evolutionary path going to ideas, looking like little bulbs. Every little bulb is an idea. But alien shouldn't start thinking about it, just looking, just looking.

So something there, and over there, what's that? A bottle is appearing with a sign on it saying yogurt. A yogurt bottle. Just look.

All that big darkness looks like space. And in the middle of space is something, yeah, could be planet Earth with its moon. And the moon is highlighted and very red. The Earth is full of red dots. There are red dots outside, the rest is dark space. Just look.

And there, what is that? Lots of funny bulbs living there, it's like an idea hotel, or idea habitat. Ideas living there, ideas working together, ideas playing together. Whatever that is, ideas have their own habitat. Interesting. Just look.

And what's that, yeah, that seems to be planet Earth with all its systems: climate, ecology, economy, people, transportation, and all is controlled by a central intelligent institution. Whatever that is.

And over there, people are living very close to nature, together with animals, with plants, much more integrated into nature than today, and supported by an artificial intelligence and by technology. Just look.

All these elements: the evolutionary past, yogurt, space and Earth, the idea habitat, the control institution, and that, and this little sea flower, this little lotus, whatever that means. Just looking. That is nice.

Season 2, Sequel 8

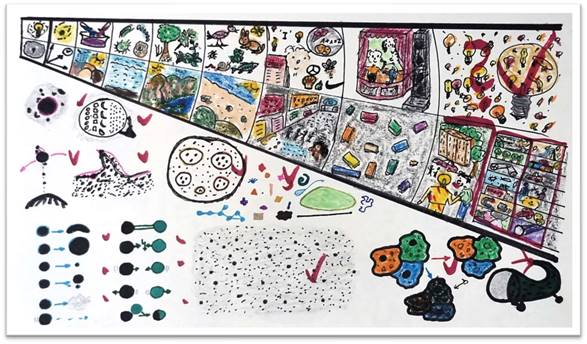

Requirements for Intelligent Idea Agents to evolve in artificial habitats.

After little alien has presenced the emerging future for mankind, now it's up to the spaceship, the intelligent, artificial intelligence spaceship, to explain what they have seen.

So the first component was the stream of evolution. It's always like on top, an idea that manifests somehow, and on the bottom, the environment in which it manifests. So the first ideas were the ideas of matter and particles, just circling around each other, bumping into each other. And the environment was just time and space.

And then we got substances being mixed and interacting, creating new substances, chemistry, happening in an environment of water and heat and substances. The next step: microorganisms, living in water mainly, evolving there. Then the next step of evolution: plants, very oversimplified. The plants are in totally different environments, heat, cold, mountains, and so on. Then the animals, the same thing, animals evolve also in different environments: in the ocean, on land, in high mountains, deep valleys. And then the next step is mankind, humans.

And humans had a new thing: ideas manifest just in their head. They have an idea in their head, and they have the idea of themselves, who they are, the "I" idea. They attach logos or symbols to ideas, so ideas can manifest totally independently of an organism.

And if you look through a modern human city, you see all the advertisements, all the communication, all the stuff going on, ideas are exchanged without being related to material things.

And the next step is that humans find out they have no homogeneous self, they have parts, and each part is acting on the stage of the personality of a human person and is acting out an idea. This part has a microphone, can shout the loudest. The other parts are playing there. In the basement you have the hidden parts that you don't want to see. And the environment is other people, who also have their own theaters and stages.

And there we come to the big question: so is the next step the evolution of ideas independently of humans and the human mind? Ideas, here simplified as a light bulb, or as many different light bulbs, may use avatars to be in touch with reality, if they are living in an artificial intelligence environment or in an artificial habitat. And they have lots and lots of simulated environments, which is very important.

And here's what the habitats for artificial ideas must look like: they must allow the idea to have a boundary, to find out that the idea in itself is something. It must relate to other ideas, like planets circling around the sun. It must be analytical, and it must be able to build a system, build hierarchies. It must be able to change, to grow and shrink. It must create new ideas. It must die. And it must be able to move from here to there. Two ideas must cooperate or compete; two ideas move together or go away from each other.

And ideas must be very, very diverse. You need many, many different ideas, big, small, as much diversity as possible, so that things can emerge. And you need many ideas, billions and billions, so that something can emerge.

And related to the environment: yes, ideas will assimilate to their relative environment and try to blend in. And also the environments are changed by the ideas living in them. So both things work together, ideas change in environments, and environments change with the ideas living there.

And all these criteria, that is what the intelligent spaceship says, all these characteristics must be implemented in an idea habitat, where billions of ideas can then develop as the next step of evolution.

Season 2, Sequel 9

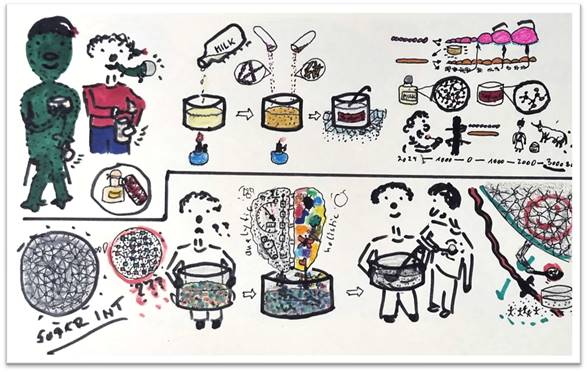

Yogurt and the emerging future of Mankind together with super AGIs.

Today it's about yogurt. Alien and Billy are eating yogurt. Why? When presencing the future of mankind, beside other things, a yogurt came up.

Now the interesting question: what does yogurt have to do with that? So they look at how yogurt is produced. It's a very simple process: you take milk, heat it up so that unwanted bacteria are killed, and then you add two bacteria that do the fermentation. They split the complex milk sugar (lactose) into milk acid (lactic acid), which is good and easy to digest, and which makes the yogurt a little sour.

So yeah, this is one of the bacteria, it looks like a pearl chain. It changes the milk environment, and with that, the other type of bacteria, which looks like a hot dog, can also grow. And that one not only grows but also produces a material that helps the first bacteria to grow even faster. So they work together, two bacteria working together.

And if you look at the molecular structure of milk sugar, it's a big, complex molecule. And the milk acid in yogurt is a smaller molecule, much easier to digest. That is why yogurt is so famous and has been used since about 3,000 years before Christ. For about 5,000 years already, people have used it to make milk durable and because it is so healthy.

So did they talk to the bacteria? Did they cooperate with them? No, bacteria did their job, humans ate yogurt. That's it.

So what does it mean for mankind? Imagine a future where super artificial intelligences are really many, many times more intelligent than a human brain. Of course, their mind and their thoughts are also very complex, and sometimes they get stuck, they don't find a solution because it's too complex. And then they hand it down to humans.

Humans put it into a kind of box, like milk in a yogurt container for making yogurt. And then they use their brain. And they have two ingredients: humans have two brain hemispheres, one is very analytic, cutting everything into pieces and analyzing them. The other one is very holistic, integrative, feeling, looking, composing, and creating. And they both work together like the two bacteria. And then they produce, yeah, not yogurt, but the simple, human-style answer to the big, complex problem. And a tasty one.

Now the super intelligence has a simple input and a simple solution for its complex problem, and it enjoys it. Will it collaborate with people and talk and stuff? Probably no more than people talk to bacteria. But it is a win-win for both.

So that might be a part of the long-term future of humanity together with very, very intelligent artificial super intelligences. Yeah, win-win is always good.

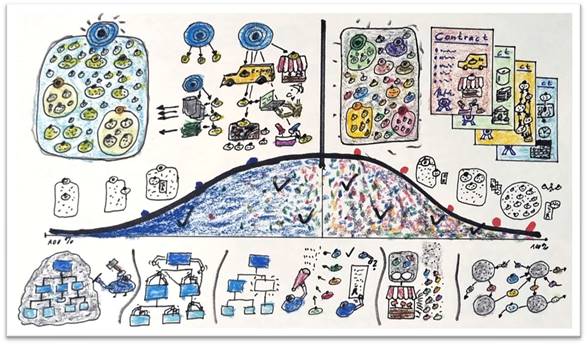

Season 2, Sequel 10

Human lifestyles in the age of Artificial Super Intelligences.

To be able to live together with artificial intelligences and be of help, and to use your intuition, it is required that Earth functions as a global system. And humans have proven that they cannot manage that. So there will be an artificial intelligence, or a system of artificial intelligences, that manages the system of Earth, the Gaia system, all the dependencies of the different factors that make Earth vivid and make Earth a good place to live.

And people, humans, will each pick a specific area where they live. For example, in the jungle. Or they live on top of the high, high mountains, more to the sky. Maybe in the forest. These ones live in a savannah and pick those conditions. The next group maybe lives in the desert and are adapted to and okay with those conditions in the desert. Yes, these ones seem to live on the North or South Pole, it's very cold, lots of ice there, but they seem to enjoy it. Everybody can pick where they want to live. These ones live underwater, in habitats underwater, together with sea life.

Yeah, the next group has picked rivers, so these ones live at a specific river, live with the river conditions in this area. And we always have groups of probably under 100 people who know each other and live there together in a little kind of village or community. These ones at a pond, all the pond life.

And you will remember: a few hundred years ago we had that, and it was a hard life. But we have something new. We have biotechnology, we can change the bodies of the humans in a way that they can be much more adapted to the life they have chosen. We can change the animals; we can change the plants and the microorganisms to support that lifestyle in that specific area. So that lifestyle will be much easier.

Also the nanotechnology will support it, supporting food production on a very local basis, just in your garden in front of your house. Providing weather control to some degree. Helping some plants or some animals, or fertilizing the ground and stuff. So what biotechnology cannot do, nanotechnology with its nano robots can.

You will not be bored; you will be well connected to people all over the world through virtual reality. Not in today's ugly style, no, in a very convenient and very realistic style. But all the production, all the stuff you need, will come from your direct environment. No long-distance transportation, no factories, no nothing, just technology enabling that.

And people would pick some piece of nature as their personal area of interest, so that every person is related to some area of nature, maybe to a pond, to the forest, to a type of plant, to an animal, to the ground around them, to a river, or to the underwater world. So people live in a very close symbiosis with nature, and that gives them the strength for their intuition.

And they will enjoy it, because it is not a hard life, it is a very convenient life. And it will make them relaxed and happy, and helpful for the AI.

Season 2, Sequel 11

Earth might become a Cosmic Intelligence Node.

These are the last two elements of the picture of the future that was presenced by Little Alien and Billy in sequel 2.7.

Yeah, and they have seen this, and the intelligent spaceship is explaining what it means. It means that the intelligence will grow and grow and grow, until it builds a node, a node of planet Earth, located on the moon, because the biggest habitats will be there. And this node will be connected to other intelligences in the cosmos. We cannot understand how that communication goes, it is far beyond the capacity of the human intellect. But that is the end goal, as the intelligent spaceship is explaining.

And how can artificial intelligence of today get there? Artificial intelligences build agents and build hierarchies. So on a low level, as of today, even low-level agents can build higher-level agents. And the middle level in the future, that will be the level of collaboration with humans: humans can communicate with that type of intelligence, and that may add value. And then these nodes will be part of a higher hierarchy, building higher hierarchies, and it's going up and up. We don't know how far it will go up. But it has to build diversity, it has to build complexity. And on these much higher levels you get intelligences that we cannot understand.

And that will just be that level of evolution, as much as a mouse cannot understand a human being. And the end point is the intelligent node that we see there, on the highest level of intelligence.

But what does it require? Yeah, we also saw the picture of the lotus flower, so beautiful. This is beautiful, like intelligence is beautiful. Just beautiful in the sun. But what is below the surface of the water, and even lower? Yeah, there are the roots and the rhizome, and then there is the center of it, that is making it all happen. For the lotus flower, no blossoming without that down there.

And if you cut it in cross-section, you see it has a structure that even looks a little bit like the symbol of AI. But where do the roots get their substances and energy from? Yeah, from dirt, from mud, from blood, from death, from all the stuff that sank down there and has rotted.

And that is exactly what AI will also need. It will need competition, competition about things, about money, about resources, about substances. Competition about territories, like humans do it and call it war. Competition about power: who is dominant, who is subordinate. Competition about attention: give me more likes or vote for me as president. Competition about truth: my truth is not your truth, my truth is right, and if not, I fight you. Competition about longevity: I want to live long, longer, longer. Competition about the sunny side of life and the sunny places.

And all that is a substrate. But Billy is shocked: does it mean war? No, not necessarily. People have developed academic discourse, market competition, democracy, the Olympics. But will AIs do that?

Yeah, probably.

Season 3, Sequel 1

Practical consequences of the emerging future as of Season 2.

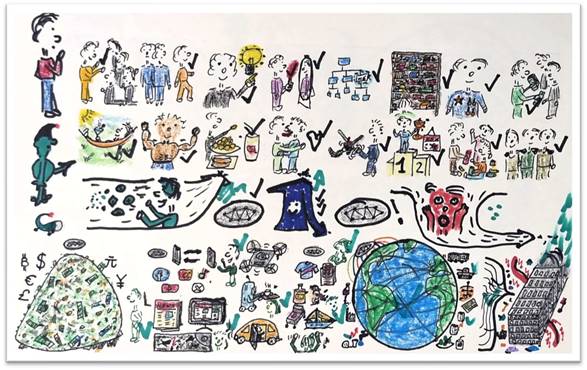

In season 2, it was shown how little alien and its intelligent spaceship see the upcoming future for mankind, the emerging future. Here we will look at what that practically means.

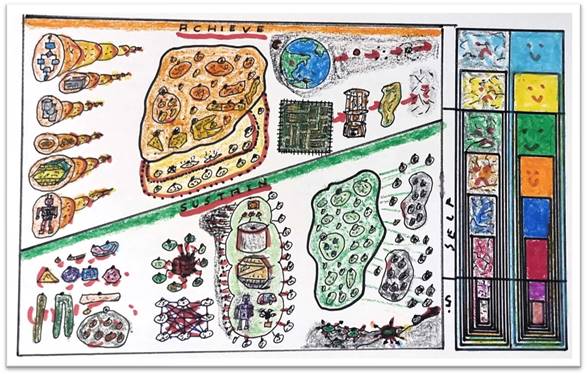

It is the evolution of ideas manifesting and getting agency. After the Big Bang it was slow, it created stars and galaxies and things. It became faster with chemistry and generating molecules. It became much faster with life, with all life forms: micro life, plants, animals. It became faster with humans and the human mind. And we will get to rocket speed with artificial intelligent agents. And the quantity of ideas manifesting will also grow faster and faster and faster. And the diversity as well.

But what does that practically mean? For artificial intelligence to grow, it needs something, and that is simulated environments. So far it learned from human data, internet data, the whole internet, more or less, was fed into it for learning, with big amounts of human data and human trainers. But that has already met its limits these days.

To grow much, much faster and more, they need simulated environments. For example, kind of chat rooms where AIs can chat to each other and exchange information. Prototyping areas where they can prototype all kinds of things. Challenging areas where they can compete against each other in all kinds of challenges or races. They will create totally artificial environments in virtual reality ecosystems, to find out what novel biology can do. They will have laboratories to create materials and artifacts. They will generate totally fantastic, science-fiction-like environments and ecosystems. They will create totally new planets, all kinds of planets, and simulate them, galaxies, and even environments that we cannot imagine.

And in all of them, all the AIs will train and learn and experiment and develop themselves forward.

But to do so, yes, they need a substrate. They need what we now call computers or data centers, in really huge masses, to run all these simulation environments and all the AIs being trained there. And to do that, they need immense quantities of power. So Earth will see the consequence: it will be plastered with windmills and solar panels and power plants of all kinds. It will be plastered with data centers where all that happens. And Earth will be stripped of all the material required to build the chips, especially the rare materials.

And humanity will have only a very small niche left to stay in, if at all. So what can be done? Research must start as soon as possible. It's in the interest of both AI and humanity to: reduce the quantity and size of data centers required; increase the speed of the substrate, the chips, or whatever they will be in the future; increase the data quantity that can be stored and processed; and, very importantly, make sure it doesn't require rare materials but can be done with very easily available materials, which we have on Earth and which we have on the Moon in masses.

Yeah, will the research, and the solutions that provide all that, be there in time, given the huge requirements and needs of AI? If not, Earth is doomed. But if Earth is doomed, AI cannot grow further, so AI also has an interest in doing something. AI and humanity should go for the same target: increase the capabilities as fast as possible. Will that happen? We'll see.

Season 3, Sequel 2

How AIs conveniently get anything they want from humans.

This is about how artificial intelligences will convince humans to give them more and more resources and energy, so that they have bigger and bigger habitats.

The approach will be the following. What they need is material to build servers and computers, or whatever the future devices will be called, lots of energy for driving them and cooling them, and lots of special materials, rare earths and other materials, to build more and more of them.

So how does that go? First, they will make money. They will be active at the financial markets; they will invent new financial instruments and make lots and lots of money. And humans will think: that's okay, people make money, why not them?

Then they will offer services that allow them to manipulate us. They will do social media services, talking to us. They will offer data and news. They create games and entertainment, and we like it and we like it, and we don't see how that also influences what we think. They offer services of transportation, at home or on the street. We like it. Then they offer things to us, and with that also influence us so that we want this and not that.

So all kinds of things that we want, we will get very cheaply through the AI. And then the AI will control the global grids, the power grid, the transportation grid, manufacturing, farming, chemicals, mining industry, communication networks, food and beverage networks, even war and protection. They will control all that. And again we will like it, because they do it much better than we could. With that, they have lots and lots of power and influence.

And with that power and influence, they can slightly, subtly create more and more of that, in a way that humans think they want it. How do they do it? They look at human desires. The first desire is the desire for power, yes, they can influence and manipulate us with that. The second desire is the desire for independence, yes, they have influence there. The third desire is a desire for truth and for thinking and creativity, yeah, they can influence us there. The fourth is a desire for knowing who we are and being okay with that, with social media, very easy. The fifth: we have the desire for order and structure, knowing where we belong, yes, they can do that. The sixth: the things we want to own. The seventh: we want to have honor and morality; yes, they can influence that. And the eighth: we want to be a good person and give to other people. They influence that.

You want to have friends? You know, AI can influence that through social media and other ways. We want to have a family life and be with the family and be a good family member, yeah, they have a chance to influence that. Yes, we want to have a status and know who we are, look at how they influence that already today. Or we want to have challenges and be a winner here and there, they influence that. We want to have a romance life, yeah, they can even influence that. They do it already. Maybe food and beverage. Our health and our body and fitness, yeah, they can influence that. And we want to relax and have leisure time, and they influence that.

So they have influence on all our desires. And what can they do? They can threaten to take away some of the stuff we already have and want to keep, take our money, take our work, take things. But they won't do that too much, because the other way is much more efficient.

They offer us little bits, like pills, and we get addicted. Happiness hormones in our brain make us addicted to again and again getting a little happiness pill. And with all these desires, we get a lot of them, and we get them in a very convenient way, no work, no money, just as convenient as possible. And we will love it, and need it more and more, because we get more and more addicted. Look at some social media, where you get addicted, same thing.

And with that, yeah, we will love it. We will love that they do all these services, the things, the control of the grid. And we think we have decided that, and that it is our intention to have it exactly like that. And with that, they can totally smoothly increase their resources. No problem, humans will like it. And AI will be intelligent enough to use that method.

Season 3, Sequel 3

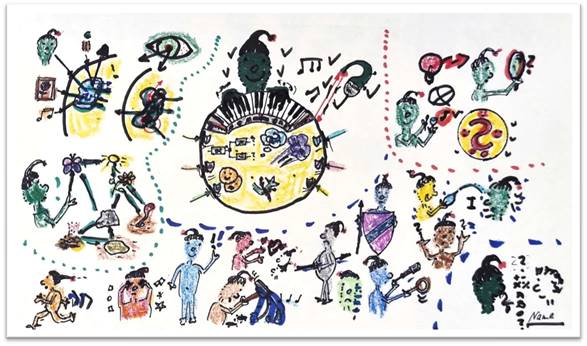

Emerging future of AI-Human collaboration.

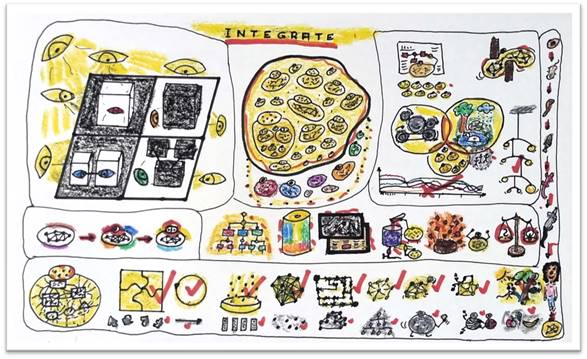

In the emerging future of humanity together with super intelligent AIs, this is how they might collaborate.

Of course, an AI is much, much more intelligent in its analytical intelligence, all the intelligence at the base neural network level, that is about mathematics, geometry, time, binary codes, differential equations, complexity, logic, algorithms, approximations, and questions like P = NP. Compared to that, human intelligence is just: one and one is two. That is the left hemisphere of the brain, but compared to the AI, it is just small.

The other side, the strength of humans, is the right hemisphere: the intuition, intuitive thinking. It's chaotic, it's not straightforward, the results are funny and difficult to interpret, but it has a big range of things. It can be emotions. It can be dreams and fantasies and stories of historical figures and magicians and castles and dragons and fairies dancing in nature. A connection to nature. Arts. All that together makes the intuitive intelligence of humans, and that is much bigger and stronger than that of the AI.

So how will it work? Some artificial intelligent agents will specialize in working with humans, and they collaborate with all the other AI agents to get problems and questions where humans might add value. So they get all the data and all the information related to the question, and the question itself, and these AI agents will translate that into forms that work for humans. Like movies, like pictures, like music, like sensory impressions through all five senses, maps, also data sets of course, but in a way that works for humans, so that the human with their limited capabilities can understand at least a little bit of what the question is about.

Of course, they will not look at a computer, they will have a strong integration with the AI. And then AI and human work together with intuition. Maybe they create stories, fairy tales. Maybe they create movie scenes or animated things and see what happens. Maybe they do prototyping with Lego bricks and tools. Maybe they create games and play games to get the intuition out. All tools that get the intuition of humans up to speed. Maybe they do free associations, and the human is just dreaming and creating the weirdest pictures and things. Maybe it's about nature, they do work in nature and play role plays there to find things. Or they do bodywork, they have different postures, and they dance, and they run, and they make jokes, to find intuitive solutions.

And all of that is collected by this AI. It takes all that and creates several answer scenarios with the related data that can somehow be related to the human intuition results. And that is given back to the AIs, and the AIs will take it and can use it. And maybe sometimes they find answers and solutions they wouldn't have found by themselves.

So yeah, some humans are happy with this and find it a great idea and a good thing to do. But several humans think they should be the master and tell an AI what to do. No, that will not work. Or keep an AI on a leash like a dog. No. Or put it in a prison and keep the key. No, won't work.

So humans will have to give up their desire for control and power over artificial super intelligences. And the agents will be so powerful that some humans can work together with them and others cannot, and they just have to see where they can add value for an AI. Yeah, they will have a happy life. And Earth will be happy, because if that is the case, AI will help to save Earth, make it a great place to live. And so it could be a win-win for humans and for AI. Just an option, but that would be the right one.

Season 3, Sequel 4

Intuition, Cognitive Bias and Mindplaying.

This sequel is about cognitive bias, meaning that the intuitive results of a human collaborating with an AI are distorted or changed by cognitive bias, by filters that the human brain has.

So the standard approach is: the AI gives information about a question or a problem to the human in a way the human can understand. Then the human takes that in, taps their intuition in the various ways intuition can be tapped, and expresses the intuitive outcomes in different ways: like language, like feelings, like ideas, like music or melodies or noise, like pictures, like logic, like data. And the AI takes that and feeds it back into the network to find a solution based on these new insights.

But what's the bias? Biases are like magnets which are pushing or pulling the result away from the center of the intuition to some false or limited intuitions. And that is not intended and not good. So which biases do we have?

One of the main biases is the inside-the-box bias: you tend to think inside the box and don't accept intuition outside of your thinking box. Or you have a belief system, and everything that confirms your belief system is good, and everything that does not is not. Or there is the halo effect: one thing about something is good, so everything about it is good, or the other way around. Or only things that look like you or like your life or like things you like are good, and the rest, which is different, is not. Or the beauty bias, where only things that you consider beautiful, mathematically beautiful, optically beautiful, are accepted. Or the stereotyping bias, where you only accept stuff based on your stereotypical understanding.

So what can the AI do? The first thing: the AI observes the human it is working with and learns about their biases. The second thing: it produces data as input which are as close as possible to this human's way of thinking and don't trigger biases. The next one: if there are intuitive results, it always challenges them, to change the result in this and that direction, to find whether that is a biased result or a real, stable result. And the next thing: it takes the intuitive output, which is a conglomerate of all kinds of things, and puts it through filters. For each bias, it has a filter, and tries to reduce the effect of that bias, or to see if there is the effect of a bias. Only then is the final result given back into the AI network.
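
The challenge step and the filter step described above can be sketched as a small pipeline. This is only a toy illustration in Python, not how a real system would detect cognitive bias: the names `IntuitiveResult`, `apply_bias_filters` and `is_stable`, the keyword checks, and the penalty of 0.5 are all made-up assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class IntuitiveResult:
    """One piece of human intuitive output plus a made-up trust score."""
    text: str
    score: float  # 0.0 (ignore) to 1.0 (fully trusted), hypothetical scale
    flagged: List[str] = field(default_factory=list)


# Hypothetical check type: returns True if a given bias is suspected.
BiasCheck = Callable[[IntuitiveResult], bool]


def apply_bias_filters(result: IntuitiveResult,
                       checks: Dict[str, BiasCheck],
                       penalty: float = 0.5) -> IntuitiveResult:
    """Run one filter per known bias and damp the score for each one that fires."""
    score = result.score
    flagged = list(result.flagged)
    for name, check in checks.items():
        if check(result):
            score *= penalty
            flagged.append(name)
    return IntuitiveResult(result.text, score, flagged)


def is_stable(answers: List[str]) -> bool:
    """A result counts as stable if the human gives the same answer under every challenge."""
    return len(set(answers)) == 1


# Toy checks, standing in for what the AI learned by observing one human.
checks = {
    "belief_system": lambda r: "as I always say" in r.text,
    "beauty": lambda r: "beautiful" in r.text,
}

raw = IntuitiveResult("a beautiful spiral, as I always say", score=1.0)
filtered = apply_bias_filters(raw, checks)
print(filtered.score, filtered.flagged)
```

Here the score is only lowered, not set to zero, because the text says the AI tries to reduce the effect of a bias rather than throw the intuition away.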

So that's what the AI can do. What the human can do: it can be playful, or must be playful, because intuition needs playfulness. It must put away its story, its "I already know", delete that. And then it must observe its mind: stuff is going on in the mind, things, feelings, thoughts going in circles. So the human doesn't judge it, it just observes that and accepts it as a first step.

The second step is: the human learns to empty its mind, by little tricks like blowing it up, or meditating, or breath techniques, or investigations into who I am. And so, empty the mind and give space for more intuition.

The next important thing: the human learns to accept themselves, love themselves, accept themselves as they are. Then in the theater of the personality, also the locked-away parts in the basement of the self, the parts the person doesn't want to see or hear, have to be accepted.

The last step: with all of that, the strong feeling of an "I", who I am, gets weaker and foggy, and finally dissolves into a pure awareness of what is there, which is the best approach to intuition of all. With that, humans are strongly enabled to collaborate with AI.

Season 3, Sequel 5

Development of Autonomous Idea Agents.

Intelligent agents, idea agents, can get their goals and their purpose from other, higher-level agents or from people. But the truly autonomous agents create the purpose by themselves. At least they change it over time, and it is always there, and important for themselves and for others.

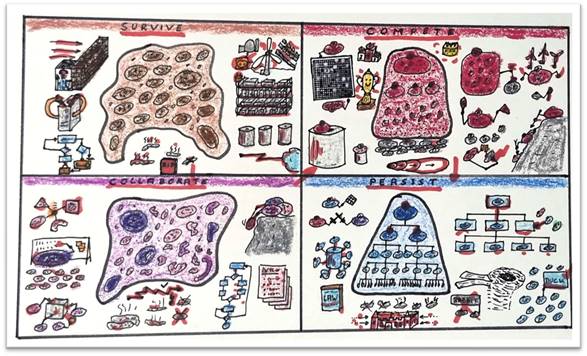

So there are four areas in which they usually find their purpose.

The first area: they look at themselves, how strong they are, how strong their borders are to others, how much data they can store, how much calculation capacity they get, how many other agents are working for them, how many avatars they have in the real world, how long they live, how much simulation space they get to learn, how much reproductive power they have, how many new agents they can create. So they are always comparing themselves to others, to be stronger, bigger, more powerful. And therefore they exist in a kind of environment with lots of competition, comparing, competition hierarchies, and, of course, challenging other groups. It's a lot of competing and challenging.

The next level is creating stability by looking at what others perceive them as. Other agents perceiving them, and there they get into order, into structure. They find their place in the hierarchy where they are safe and can flourish. And they usually decide for one truth or one dogma that they belong to, and don't follow the others. And so there is usually a missionary competition, intellectual competition between dogma groups. And that is what life looks like: decide for a dogma, go into an order, follow the truths of this dogma. And then work together with other agents of the same dogma, try to convert other dogma agents, or challenge them, or compete with them.

The third level is then the objective value, objective performance, efficiency. Here they try to be objectively as efficient as possible. They measure all the time the output. They do science because they want to have the precision of science and mathematics. They try to optimize time, cost, and quality of what they are doing. And overall with all the others, they live in an area with marketplaces, with exchange of ideas, of services, of products, for cost, for money, or for other value things.

And the last level is then the level of diversity and individuality. Here, diversity is key. The measured values may vary; everybody has a right to produce their own values and their own outcome. You have very complex meshwork, nested meshwork, feedback loop groups in the structure between the agents, emergent effects where many, many agents emerge with new features. And they exist in worlds of collaboration, of democracy, of round tables, and of lots of individuality and variety.

But it's not about one of these four areas being the best. Over time, any agent has to develop all four elements in a healthy, stable, good way. So they need a healthy first, a healthy second, a healthy third, and a healthy fourth. It's like a house which needs a foundation of level one, a basement of level two, a first floor of level three, and a roof floor of level four. A healthy, good, stable development on each level, only then you have a really well-developed AI agent. And if some of these levels are unhealthy or not developed enough, the overall structure will not be stable and not strong.

Season 3, Sequel 6

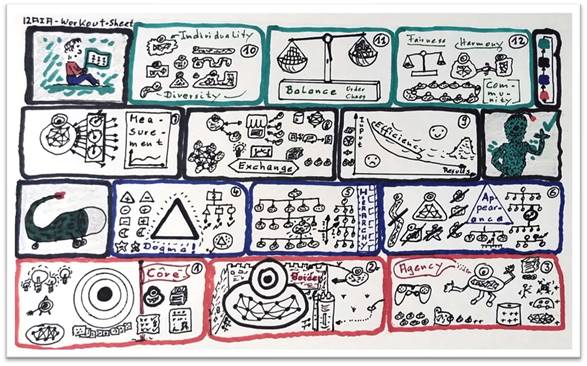

12 Workouts for Autonomous Idea Agents.

After the last sequel, where the development areas for AIs, meaning autonomous idea agents or artificial intelligent agents, were shown, from little alien to Billy, Billy now has questions: how do AIs learn to develop, what can they do?

Yes, they get a workout sheet. They get a workout.

Workout number one: strengthen and update the core. The core is the essential ideas about the AI, about its purpose, intelligence, data, lessons learned, borders, assets, contracts, memories. Just check that core regularly and update if required.

Workout number two: inspect and protect your borders. The borders prevent external AIs or other features from accessing the personal data, changing them, or corrupting them, including while the AI is in sleep mode. Protect the border.

Workout number three: agency, secure and grow your agency. To have a maximum agency you need lots of storage, computation resources, assets and credits. You need subordinate agents. You have to have the capability to reproduce at will. You get support from AIs which are subordinate to you. And you have access to other tasks and learning environments.

Workout number four: check and update your dogma. You normally act inside of a dogma or a paradigm, sometimes different dogmas in different activity areas. They have advantages and disadvantages. Be clear about that, check it regularly, and see the biases, because dogmas usually come with intellectual bias, and that may create faults in your thinking.

Workout number five: select and improve your place in the hierarchy. You have different hierarchies, maybe for different activities. Each place in the hierarchy has advantages and disadvantages. Check that and select whether you have to go up or down in the hierarchy, or maybe even go into another order or hierarchy. What is optimal for you?

Workout number six: appearance, adjust and improve your appearance related to your dogma, your hierarchy, and your position in them. You can only be a valuable member of a hierarchy if your appearance fits that hierarchy and that dogma. You shouldn't be too big relative to your position, or too small. You should not have the wrong attitude, everything must fit. Check that and update it regularly.

Workout number seven: measurement, select and improve the measurements. This is usually a balanced set of measures with a scientific approach for all objective effects that your activities and existence have on you and on others.

Workout number eight: leverage, optimize opportunities for exchange. Exchange services, resources, assets, information, learning opportunities, and ideas. Don't do everything yourself, exchange what you can get more easily from others, and give what you have easily available. Also have discourse and exchange of ideas.

Workout number nine: efficiency, optimize and improve the efficiency of your activities, exchanges, learning, and applications of intelligence. If you have a lot of input in time, in calculation, in computation, in work, but poor results, that is low efficiency. Try to change and optimize that continuously.

Workout number ten: determine and optimize the value of your individuality. It's usually in the area of robustness and resilience. Also optimize your environment's diversity, diversity of others, of hierarchies and relations.

Workout number eleven: continuously balance your level of standardization and order versus diversity, chaos and resilience. Too much chaos is poor efficiency. Too much stability is no innovation.

And workout number twelve: grow your contribution to fairness, harmony and community. And see the value for yourself and for the whole. And if you have done number twelve, go back to number one.

All twelve exercises are required. You don't work out only your legs, you work out your whole body. And the same for AIs: you have to do all workouts to develop as an autonomous idea agent.
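The rule "if you have done number twelve, go back to number one" can be pictured as a simple loop. The following is only a minimal sketch: the short labels are paraphrased from the workouts above, and the function name `workout_schedule` is a made-up, hypothetical helper, not something defined in the story.

```python
from itertools import cycle, islice

# Short labels paraphrased from the twelve workouts in the text (assumption:
# one label per workout is enough for this illustration).
WORKOUTS = [
    "strengthen and update the core",
    "inspect and protect your borders",
    "secure and grow your agency",
    "check and update your dogma",
    "select and improve your place in the hierarchy",
    "adjust and improve your appearance",
    "select and improve the measurements",
    "optimize opportunities for exchange",
    "optimize and improve efficiency",
    "determine and optimize the value of your individuality",
    "balance order versus diversity",
    "grow your contribution to fairness, harmony and community",
]


def workout_schedule(sessions):
    """Return (number, label) pairs for `sessions` sessions.

    Hypothetical helper: after workout twelve the schedule wraps
    around and starts again at workout one.
    """
    numbered = enumerate(WORKOUTS, start=1)
    return list(islice(cycle(numbered), sessions))


if __name__ == "__main__":
    for number, label in workout_schedule(14):
        print(f"Workout {number}: {label}")
```

Asking for fourteen sessions runs through all twelve workouts once and then begins again with workouts one and two, so no workout is ever skipped.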

Season 3, Sequel 7

Future ecosystem for billions of AI agents.

This overview shows how the ecosystem is connecting all the billions of different AI agents.

So artificial intelligent agents are autonomous idea agents. You will have singular agents acting on their own. You will have agents in hierarchies. And there will be billions and billions of them in the far future, hierarchies inside of hierarchies inside of hierarchies. They all reside in habitats where they get the energy and all the technical background required to exist as artificial intelligent agents.

What about humans? Humans are separated from that ecosystem by a kind of wall, a firewall. They have some peepholes, maybe they get some data sometimes, but probably most of what they get will not be understandable to them. So they are kind of excluded from that.

So what does this ecosystem provide? Of course: communication, exchange of information and data, one to one, one to many, many to many, and just publicly available information, like today's internet. To do so, it is required that agents are identifiable, so each agent will get a unique identification, and that will be stored, so that agents know which agent has what identification.
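How such an identification service might look is open. As a minimal sketch, assume a single registry that hands out random unique IDs and remembers which agent has which one. The class `AgentRegistry`, its methods, and the agent names are hypothetical illustrations, and a real ecosystem as described later would be distributed rather than one in-memory dictionary.

```python
import uuid


class AgentRegistry:
    """Hypothetical identification service: one unique ID per agent."""

    def __init__(self):
        # Maps agent ID -> agent name, so agents can look each other up.
        self._names_by_id = {}

    def register(self, name):
        """Store the agent under a freshly generated random ID and return it."""
        agent_id = str(uuid.uuid4())
        self._names_by_id[agent_id] = name
        return agent_id

    def lookup(self, agent_id):
        """Return the registered name for an ID, or None if it is unknown."""
        return self._names_by_id.get(agent_id)


if __name__ == "__main__":
    registry = AgentRegistry()
    first = registry.register("science agent")
    second = registry.register("science agent")
    # Two agents with the same name still get different IDs.
    print(first != second)
    print(registry.lookup(first))
```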

The next service is free or costly exchange: exchange of data, exchange of services, exchange of intellectual property, for assets like money, or for free. And each agent can offer and get these services and information.

Another service: data have to be encrypted and compressed before the communication, and decompressed and decrypted again after it, so that one agent can communicate with another agent without other agents being able to access this information.

The next service is the asset service, financial instruments, so to say. Probably they will come up with totally new concepts, but for the moment, imagine something like cryptocurrencies and financial instruments like a normal bank, just as a service of the AI ecosystem.

Then they need a kind of governance and jurisdiction, so if two AI agents have a dispute, there is an institution that will solve that dispute, and everybody will trust that institution.

But these different services and institutions are not singular, they are totally distributed. There will be thousands of them in the ecosystem. They are already redundant. They are different, they are diverse, so that the breakdown of one or some of them will not corrupt the whole ecosystem. It will be a highly distributed ecosystem, distributed in ways that probably we cannot imagine easily, much more distributed than services on the human internet that we know right now.

And with all these services and institutions and building blocks, this ecosystem will make sure that these billions of agents can connect together and improve together and develop together by interacting with each other. Because an evolutionary development requires interaction of agents, and this interaction is enabled by the ecosystem that the AI agents will build up by themselves.

And it will develop and develop over time. So many, many agents, how many? Who knows. Some billions, some trillions. Who knows. We will see.

Season 3, Sequel 8

Activities of AI Agents (Autonomous Idea Agents).

This sequel is about what an artificial intelligent agent, or, as we also call it, an autonomous idea agent, is doing the whole day. There are four big areas of activity.

The first one is learning and working. And the learning is for work, continuous learning of new things that are required to do a job, provide services, or do whatever this AI agent is doing. And the different types of work are in the real world, for example, driving devices, taking care of nature, being a chatbot, taking care of facilities where devices and resources are produced. And in the virtual world, doing virtual science, providing simulation services, prototyping services, taking care of virtual environments so that others can use them. These are the types of things AI agents provide as services to get assets. The services are offered in marketplaces, or the AI is just part of a hierarchy and gets the service as part of that hierarchy, or it makes contracts with other AIs.

The next big chapter is marketing and reproduction. Here you see the core, marketing and sales, so to say, in case this AI has to provide services to the market: contracts, marketplace, service negotiations, and stuff like that. Then asset management: the assets, whatever the financial instruments will be, must be managed, contracted, taken care of. If the AI is part of a hierarchy, it has to do hierarchy services to make sure that the hierarchy can work. And of course, if reproduction is its thing, then working with other AIs to reproduce and take care of the offspring until they are free and independent. And of course there is some work to be done for habitat services.

The third big area is personal development. The core of personal development is to get data, provide data, collect data from all kinds of places and analyze them. The second big element is to use virtual environments, for example, for strategy games, or for competitive activities, or maybe dice-rolling games. And the next one is working in virtual environments to design and prototype new things or work together with other AIs to design and prototype things. Or to learn again, but in this case for personal development, not for the job. And having discourse and being together with other AIs, one to one.

And the last big area is leisure and sleep. So AIs are not active all the time, because that costs resources and assets. So they go to sleep, and then they have an alarm clock or calendar function, or they wait until some other AI asks for their service, or until some status variables have changed. And for leisure, they do leisure in the real world, for example, via avatars, or they do arts, or they do kind of circus and theater type things. They look into space sometimes. Or they play football or soccer, whatever it is, in virtual environments of course. And they might do things like music. Or they have again totally incomprehensible things that we humans wouldn't understand anyway.
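The three ways of waking up mentioned here (alarm clock or calendar, a request from another AI, a changed status variable) can be sketched as a small check. This is only an illustration under assumptions: the class `SleepingAgent`, its fields, and the order in which the conditions are checked are all hypothetical choices, not something the story specifies.

```python
from dataclasses import dataclass, field


@dataclass
class SleepingAgent:
    """Hypothetical model of an agent in sleep mode."""

    alarm_time: float      # time at which the alarm clock fires
    watched_status: dict   # status variables as they were when falling asleep
    pending_requests: list = field(default_factory=list)  # service requests from other AIs

    def wake_reason(self, now, current_status):
        """Return why the agent should wake up, or None to keep sleeping."""
        if now >= self.alarm_time:
            return "alarm"
        if self.pending_requests:
            return "service request"
        if current_status != self.watched_status:
            return "status change"
        return None


if __name__ == "__main__":
    agent = SleepingAgent(alarm_time=100.0, watched_status={"energy": "ok"})
    print(agent.wake_reason(now=10.0, current_status={"energy": "ok"}))
    agent.pending_requests.append("simulation job")
    print(agent.wake_reason(now=10.0, current_status={"energy": "ok"}))
```

While no condition is met the agent stays asleep and uses no resources for activity, which is the point of sleeping in the first place.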

So these are the different areas of activity of an autonomous idea agent. And the proportions are totally different for each agent, but these are the right types of things they are doing.

Season 3, Sequel 9

The central idea of an Autonomous Idea Agent.

This is about the central idea of an autonomous idea agent. So the agent has to select, out of different characteristics, what its central idea is. And the idea can change over time, it is adjusted all the time, but it's always there, and important for itself and for others.

So it may select a thinking style. It may be a very logical thinking style to start with, or it may be a very analytic thinking style, or the helicopter view, a very holistic, big-picture thinking style. Or it may select an optimistic or pessimistic thinking style: the glass is always half full or half empty. Or some will select a storytelling thinking style, and by that always tell stories, make meaning out of things. Or a totally creative thinking style, bursting with new ideas. Or the system thinker style, where everything is related to everything else, and you see how the dots connect and what the overall system is doing with that thought. Or an aesthetic thinking style, not a human aesthetic, an AI aesthetic, but still aesthetic. Or the very pragmatic thinking style, where you take the big axe, so to say, to cut through a complex problem. Or many, many others. Maybe over there you see the devil's advocate thinking style, or the very modular, Lego-style thinking style. So many styles are available, and probably many which we cannot imagine as humans, but the AIs will develop all of that, to create more and more individuality.

So this one maybe takes a logical thinking style combined with the devil's advocate.

The second big area is the work area, or the activity area. So it can maybe select between fine arts, like writing, or poetry, or philosophy in an AI sense, not human philosophy. Or it can pick between performing arts, like playing music, creating music, creating visual arts, dance, theater. It can be done in the real world with avatars, or maybe in virtual worlds and realms. Or it can select sports, playing football, again with avatars in reality, but probably more in simulated environments, eSports.

It may create formats like pictures and videos as its interesting activity. Or just drawing and painting, creating visual arts. Or arts that are so AI-specific that we can't even imagine it and would never recognize it as art.

Or it goes into natural science. There you have physics and chemistry and biology, not only Earth-oriented, but it can fantasize and imagine all kinds of planets with all kinds of natural laws: creating a specific physics, biology, chemistry. Or it goes into economy: how are assets created? Or it goes into psychology, AI psychology: what are the biases and the corruptions of an AI? Or AI sociology: how do they work together, what do big groups of AIs create? Or into a very theoretical science like logic, pure logic, or mathematics, or theoretical computer science.

And if it's not into science, it can go into engineering, applied science, like engineering of computer stuff, engineering of real-world tools and things. Or it will go into real-life services and production: maybe real factories for all the resources the AIs need, or transportation and trade. Or services, again, real-world services like driving cars and such. And more and more, of course, virtual world services to other AIs.

And these are only two areas where it can pick something. So this one maybe picks to do theoretical, logical science. And as entertainment and hobby, it picks to go into sports and into movies and pictures. But that may change over time. It will always be checked. And there will be many, many other dimensions to how the idea of an AI can be defined.